VionixAI Intelligence Brief

A focused creator policy briefing on AI video, monetization, originality, disclosure and platform trust.

AI videos can still earn money in 2026, but the easy version of the business is getting weaker. The platforms are not banning AI video as a category. They are tightening the standard around originality, realistic synthetic media, impersonation, reused footage, mass production, copyright safety and audience trust.

The most important policy lesson is simple. AI is acceptable as a production tool when the creator adds real editorial value. AI becomes risky when the video looks like a template, copies another creator, hides realistic synthetic media, uses a cloned voice or face without permission, or publishes low-effort clips at scale.

This briefing uses the latest official platform policy pages and recent reputable reporting available at review time. Where a platform has not published a policy update in the past month, this newsletter does not pretend otherwise. It reads the current rules as they stand and explains the practical creator risk.

Inside this issue

The new monetization test for AI video

YouTube-first strategy for safer AI income

Facebook, Instagram, X and TikTok policy pressure points

A practical publishing checklist before uploading AI videos

The real question is not whether AI is allowed

Creators often ask whether AI videos can monetize. That question is too broad. A more useful question is whether the final video gives the platform a defensible reason to pay for it. YouTube, Facebook, Instagram, X and TikTok all have different monetization systems, but their direction is increasingly similar. They want original work, authentic audience behavior, transparent synthetic media, safe content and a clear creator contribution.

A faceless AI video is not automatically unsafe. An AI voiceover is not automatically demonetized. An AI avatar is not automatically prohibited. The risk begins when the production workflow creates videos that are repetitive, mass-produced, misleading, copied, impersonating someone, or built mostly from reused footage with minor edits.

The creator standard has moved from output volume to editorial value

The safe AI creator is no longer the person who can generate the most clips. The safe creator is the person who can prove judgment, authorship, source discipline, voice, editing choices, permissions and audience usefulness inside every video.

YouTube remains the first platform creators should understand

YouTube is still the priority platform for many AI video creators because it offers the most mature long-form monetization system, Shorts monetization, memberships, fan funding, shopping and a clear Partner Program framework. But YouTube is also where low-effort AI content is most likely to face review pressure.

The current policy direction is not anti-AI. YouTube’s own AI guidance describes AI tools as part of modern creator production. The monetization problem comes from inauthentic content, reused content, deceptive synthetic media and videos that do not provide enough original value for viewers.

A safer YouTube AI video usually has these signals

- Original script, original structure and a clear editorial purpose

- Human narration, meaningful commentary or expert analysis

- AI visuals that support the story rather than replace the story

- Proper disclosure when realistic synthetic content is used

- Licensed music, licensed footage and permission-safe voice use

- Enough variation between videos to avoid a mass-produced template pattern

The phrase creators should pay attention to is “inauthentic content.” YouTube uses this language for videos that are repetitive, mass-produced or easily replicated at scale. That matters directly for AI video channels because many workflows use the same prompt structure, same voice, same editing rhythm and same template across dozens of uploads.

The most practical fix is not simply adding a human voiceover. A weak voiceover over reused footage may still look thin. A stronger approach is to build each video around a specific claim, a clear explanation, original sequencing, new context, a distinct visual plan and a final edit that could not be mistaken for a generic content farm.

For YouTube-first creators, the safest AI monetization model is educational, documentary, tutorial, analytical or story-driven content where AI helps production but the channel’s value still comes from human judgment.

Facebook and Instagram are rewarding original creator work

Meta’s direction is especially important for Reels creators. Facebook has become more explicit about rewarding original creators and reducing reach for unoriginal or duplicative posts. That affects AI video pages because many AI content farms depend on recycled clips, small edits, third-party watermarks, copied captions or synthetic narration added over someone else’s work.

On Facebook, originality does not mean a creator can never use third-party material. It means the creator must add something genuinely new. Fresh information, analysis, meaningful on-screen presentation, substantial improvements to a storyline or strong creative transformation are safer than simply reacting, narrating what is already visible, adding captions or changing speed.

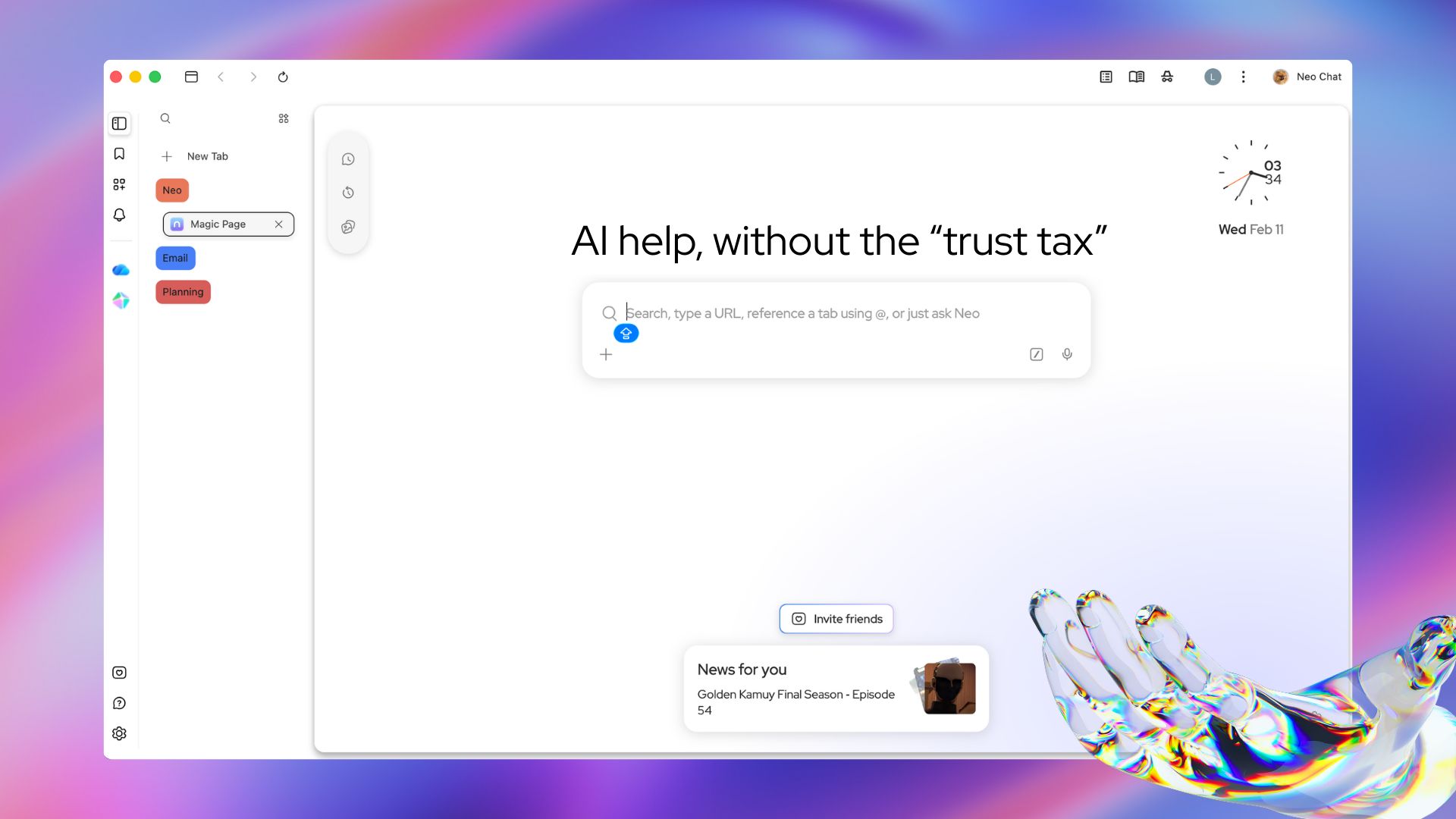

Smarter browsing. Your data never leaves the room.

Most AI tools are a trade — your data for intelligence. Norton Neo breaks that deal. Powerful built-in AI, anti-fingerprinting, VPN, and ad blocking come standard. No setup. No add-ons. No compromises. Search, summarize, and write with AI that works inside your browser and stays there.

For Meta platforms, AI creators should avoid these weak signals

- Reuploading clips with only captions or borders added

- Using AI voiceover to describe what viewers can already see

- Posting the same format repeatedly with minimal topic change

- Using another creator’s video as the real substance of the post

- Building pages that look like anonymous repost networks

- Publishing realistic AI media without enough context for viewers

Instagram’s monetization features are account-specific and product-specific. Subscriptions, gifts, creator marketplace access, bonuses and other tools depend on eligibility, region, account status and compliance. For AI video creators, this makes the Professional Dashboard the place to confirm what an account can actually use. If a monetization product is not visible there, creators should not assume general internet advice applies to their account.

The practical Meta strategy is to treat AI video as a creative layer, not as the whole business. Use AI for previsualization, scripting support, animation, editing assistance, sound cleanup or visual experimentation. Keep the page identity, narrative judgment and publishing standards human-led.

Meta’s quiet warning is about copycat economics

Reels distribution and monetization depend on platform confidence. If a page looks like it is harvesting attention from other creators instead of building original audience value, AI tools will not protect it. They may even make the pattern easier for automated systems to detect.

X has a sharper disclosure risk around synthetic conflict media

X is different from YouTube and Meta because much of its creator monetization is tied to conversation, impressions and account-level eligibility. For AI video creators, the current risk is not only whether the video is original. It is whether the account is authentic, eligible, compliant and not using synthetic media in a way that misleads users.

X’s Creator Revenue Sharing requires an active eligible subscription, sufficient organic impressions, verified followers, a supported country and compliance with X rules. The platform also says it rewards high-quality content that drives meaningful interactions and conversation. That means low-quality AI clips designed only to bait replies may be risky even when they gain reach.

The clearest AI-specific monetization rule found in the current X materials concerns armed-conflict videos. Effective March 3, 2026, users who post AI-generated videos of armed conflict without disclosure face suspension from Creator Revenue Sharing for 90 days, with later violations risking permanent suspension of payments. This is a narrow rule, but it reveals the broader direction. Synthetic realism around sensitive events must be labeled clearly.
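To make the timing of the rule concrete, here is a minimal sketch that models it as this briefing describes it. It is not X's enforcement logic: the function `payout_status`, the `PayoutStatus` type and two mechanics are assumptions, namely that only violations on or after March 3, 2026 count, and that the 90-day suspension starts on the date of the first counted violation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Effective date and suspension length as described in this briefing.
EFFECTIVE_DATE = date(2026, 3, 3)
SUSPENSION_DAYS = 90


@dataclass
class PayoutStatus:
    suspended_until: Optional[date]  # end of the 90-day window, if any
    permanent_risk: bool             # True once a later violation is recorded


def payout_status(violation_dates: list[date]) -> PayoutStatus:
    """Hypothetical model of the undisclosed armed-conflict AI video rule.

    Assumptions (not X's published mechanics): only violations on or after
    the effective date count, and the suspension starts on the first one.
    """
    counted = sorted(d for d in violation_dates if d >= EFFECTIVE_DATE)
    if not counted:
        return PayoutStatus(suspended_until=None, permanent_risk=False)
    first = counted[0]
    return PayoutStatus(
        suspended_until=first + timedelta(days=SUSPENSION_DAYS),
        # Any violation after the first risks permanent loss of payments.
        permanent_risk=len(counted) > 1,
    )
```

Under these assumptions, a single undisclosed post on April 1, 2026 would pause payouts until June 30, 2026, and a second one would move the account into permanent-suspension risk.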

For X, AI video creators should think like publishers. Do not use synthetic footage to fake events. Do not hide AI involvement in realistic crisis, war, public safety, election, health or finance content. Do not create multiple accounts to amplify the same AI clips.

TikTok is more open to AI but strict on realistic labels

TikTok is one of the most AI-native video platforms because its culture already rewards remixing, fast editing, stylized visuals and experimental formats. But TikTok’s AI policy has a clear transparency requirement. Creators must label AI-generated content that contains realistic images, audio or video.

TikTok lets creators apply AI labels themselves and may apply labels automatically when it detects AI-generated or significantly edited content. Content Credentials, metadata based on the C2PA standard, can help platforms recognize AI-generated content as well. For creators, the practical lesson is that hiding AI use is becoming less reliable. Disclosure should be part of the workflow before publishing, not an emergency fix after a post gains attention.

TikTok AI videos become higher risk when they include

- A real person saying words they did not say

- A real person shown doing something they did not do

- A realistic event that is modified or fabricated

- AI face swaps that substantially change a person’s appearance

- Fake authority sources, crisis scenes or public figure endorsements

- Likenesses of private adults used without permission, or likenesses of young people

TikTok’s Creator Rewards Program adds another layer. Eligible videos need to be original, high-quality and longer than one minute. TikTok says original content for this program does not include Duets, Stitches or sponsored content. That matters because many creators still build AI accounts around reaction formats or quick remixes. Those formats may grow an audience, but they may not qualify in the same way for direct rewards.
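The baseline criteria above can be turned into a quick pre-publish check. This is a minimal sketch, not TikTok's eligibility system: the `Video` fields, the `kind` labels and the `rewards_blockers` function are hypothetical, and "high-quality" is left out because TikTok judges it, not the creator.

```python
from dataclasses import dataclass

# Formats this briefing says TikTok does not count as original for the program.
EXCLUDED_KINDS = {"duet", "stitch", "sponsored"}
MIN_SECONDS = 60  # videos must be longer than one minute


@dataclass
class Video:
    duration_seconds: float
    kind: str          # hypothetical label, e.g. "original", "duet", "stitch"
    is_original: bool  # the creator's own judgment, not a TikTok field


def rewards_blockers(video: Video) -> list[str]:
    """Return reasons a video would likely miss the baseline criteria described above."""
    reasons: list[str] = []
    if video.duration_seconds <= MIN_SECONDS:
        reasons.append("not longer than one minute")
    if video.kind.lower() in EXCLUDED_KINDS:
        reasons.append(f"{video.kind} is not counted as original content")
    elif not video.is_original:
        reasons.append("not original")
    return reasons


# Example: a 45-second Stitch fails on both length and format.
print(rewards_blockers(Video(45, "stitch", True)))
```

An empty list only means the video clears these stated criteria; it does not guarantee payment.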

A stronger TikTok AI monetization format

Build one-minute-plus original videos where AI supports the concept, but the creator controls the insight, pacing, topic selection, captioning, disclosure and final edit. Educational explainers, visual storytelling, product demos, software tutorials and original animated narratives are safer than quick AI reposts.

The cross-platform rulebook is becoming one standard

Each platform uses different words, but the practical creator standard is converging. YouTube talks about original and authentic content. Meta talks about original creators and meaningful transformation. TikTok talks about realistic AI labeling and original high-quality videos for rewards. X talks about authenticity, meaningful interaction and synthetic media disclosure in sensitive contexts.

For creators, this means a video should pass five tests before upload. Is it original enough to stand without the AI tool? Is any realistic synthetic media disclosed? Are all voices, faces, footage, music and images permission-safe? Does the video add something new for viewers? Would the channel still look legitimate if a human reviewer opened the newest uploads and watched the most-viewed clips?
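The five tests above can be kept as a written checklist that blocks an upload until every answer is yes. This is a minimal sketch; the dictionary keys and the `failed_tests` function are hypothetical names, and a video with no realistic synthetic media would simply answer yes to the disclosure question.

```python
# The five pre-upload questions from this briefing, answered by the creator.
FIVE_TESTS = {
    "original": "Is it original enough to stand without the AI tool?",
    "disclosed": "Is any realistic synthetic media disclosed?",
    "permission_safe": "Are all voices, faces, footage, music and images permission-safe?",
    "adds_value": "Does the video add something new for viewers?",
    "reviewer_ready": "Would the channel still look legitimate to a human reviewer?",
}


def failed_tests(answers: dict[str, bool]) -> list[str]:
    """Return every question not answered yes; a missing answer counts as a fail."""
    return [question for key, question in FIVE_TESTS.items() if not answers.get(key, False)]
```

Treating a missing answer as a failure is a deliberately conservative choice: a test nobody checked should not pass by default.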

The safest AI video business is not fully automated

Full automation may look efficient, but it creates policy risk. A durable AI video channel needs human editorial control, documented permissions, disclosure habits, quality review and a recognizable point of view.

A practical upload checklist for AI video creators

Originality check: Make sure the script, structure, sequence, explanation and final edit show a real creator contribution.

Disclosure check: Label realistic synthetic media, especially when AI changes a person, voice, event, public issue, health topic, finance topic, election topic or conflict scene.

Rights check: Confirm licenses for footage, music, images, voices, celebrity likenesses, avatars and datasets used in production.

Channel pattern check: Review the last ten uploads. If they look like the same template with different keywords, rewrite the format before scaling.
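Part of the channel pattern check can be automated when scripts or descriptions are available as text. A minimal sketch, assuming single-word topic keywords: it removes those keywords, measures how many words each pair of the last ten uploads shares (Jaccard similarity), and flags the channel when the average overlap is high. The function names and the 0.6 threshold are illustrative assumptions, not a platform figure, and text overlap cannot see a repeated voice, visual style or editing rhythm.

```python
import re
from itertools import combinations


def word_set(text: str, keywords: set[str]) -> set[str]:
    """Lowercase words in a script, minus the topic keywords that change per video."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    return words - {k.lower() for k in keywords}


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of distinct words two uploads have in common (0.0 to 1.0)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def looks_templated(scripts: list[str], keywords: set[str], threshold: float = 0.6) -> bool:
    """Flag the most recent uploads if they mostly share the same words once keywords are removed."""
    recent = scripts[-10:]  # the "last ten uploads"
    sets = [word_set(s, keywords) for s in recent]
    pairs = list(combinations(sets, 2))
    if not pairs:
        return False  # a single upload cannot form a pattern
    average = sum(jaccard(a, b) for a, b in pairs) / len(pairs)
    return average >= threshold
```

For example, three scripts reading "Top five facts about {topic} you never knew" with only the topic swapped score an average overlap of 1.0 and are flagged, which is exactly the "same template with different keywords" pattern the check describes.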

The best 2026 strategy is not to build an AI video factory. It is to build a human-led media workflow where AI reduces production friction. Use AI to draft faster, edit faster, visualize ideas, translate content, test thumbnails, clean audio and build animations. Then add the things platforms can reward safely, including judgment, originality, clarity, expertise and audience trust.

AI video can monetize in 2026. But the channel must look like a creator business, not a synthetic content dump. The platforms are giving creators a path, but the path runs through originality, disclosure, compliance and real viewer value.

From the bookshelf

AI 150 Income Ways for Career Survival

A Practical Playbook to Build AI Income From Your Existing Career

AI is changing every career. The safest professionals will not be the ones who ignore it. They will be the ones who learn how to use it wisely.

Yusuf Chowdury maps out 150 practical ways working professionals can layer real AI income on top of the job they already have, without quitting, without coding, and without chasing trends. A calm survival playbook for the next phase of work.

Kindle Edition, by Yusuf Chowdury

About the Author

Yusuf Chowdury

Yusuf Chowdury writes about artificial intelligence, digital strategy and the practical future of work for professionals who want to adapt with clarity rather than panic.

Companion read

AI Shift

A practical companion for professionals trying to understand how AI changes work, income, skills and long-term career positioning.

Source notes

YouTube Help, YouTube channel monetization policies, latest visible update on inauthentic content dated July 15, 2025.

YouTube, How Creators Use AI for Content Creation, reviewed May 13, 2026.

TikTok Help Center, About AI-generated content, reviewed May 13, 2026.

TikTok Help Center, Creator Rewards Program guidance and Creator Fund comparison, reviewed May 13, 2026.

Meta Newsroom, Rewarding Original Creators on Facebook, March 13, 2026.

Meta Business Help Center and Instagram Help Center, Partner Monetization Policies and Content Monetization Policies, reviewed May 13, 2026.

X Help Center, Creator Monetization Standards, reviewed May 13, 2026.

X Help Center, Creator Revenue Sharing, including AI-generated armed-conflict disclosure enforcement effective March 3, 2026.

VionixAI.tech Intelligence Brief