VionixAI Intelligence Brief

Edition on practical AI specialist workflows for everyday operators

Most people using AI today are still doing it the wrong way. They open a chatbot, type a question, get a reply, and move on. That is conversation, not work. The people getting real productivity gains from AI are not the ones who type faster prompts. They are the ones who stopped treating AI as a chat window and started treating it as a team of small specialized workers that each handle one job well. This shift is quiet but it is the single biggest separator between people who save ten hours a week with AI and people who still feel like AI is a toy.

A specialist in this sense is not a different model. It is the same AI given a permanent role, a set of rules, a narrow task definition, and a working memory of how you like things done. Think of it like hiring. You would never hire one generalist and ask them to do legal review, graphic design, copywriting, customer support, and financial analysis on the same day. But that is exactly what most people do with AI. The fix is to split that one generalist into many focused specialists, each with its own instructions.

What an AI specialist actually is

Under the hood, every modern AI specialist is built from five components. Understanding these five is the difference between a setup that works reliably and one that breaks every second day. The components are prompt, context, role, memory, and tools. The prompt is what you type in the moment. The context is everything the AI can see when it answers, which includes your previous messages, attached files, and system-level instructions. The role is the identity you assign to the AI, which shapes how it approaches the task and what voice it writes in. The memory is the information it retains across sessions so you do not repeat yourself. The tools are the external abilities it can use, such as web search, file reading, code execution, or a connection to your email.

When you set up a specialist properly, you are locking four of these five in place so only the prompt changes day to day. That is where consistency comes from. A generic chat conversation changes all five every time you open it, which is why the same question can give you wildly different answers across sessions.
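To make the "lock four, vary one" idea concrete, here is a minimal sketch in Python. It is an illustration only: the `Specialist` class, its field names, and the request layout are invented for this example and do not match any platform's actual API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Specialist:
    """Four components fixed at setup; only the prompt is supplied per request."""
    role: str
    context: tuple[str, ...] = ()   # standing instructions, reference snippets
    memory: tuple[str, ...] = ()    # preferences retained across sessions
    tools: tuple[str, ...] = ()     # names of enabled abilities (hypothetical)

    def build_request(self, prompt: str) -> dict:
        """Combine the fixed parts with the one part that changes."""
        system = "\n".join([self.role, *self.context, *self.memory])
        return {"system": system, "tools": list(self.tools), "prompt": prompt}


email_writer = Specialist(
    role="You write client emails in a warm, concise voice.",
    context=("Keep replies under 150 words.",),
    memory=("The user signs off with 'Best, Sam'.",),
    tools=("file_reading",),
)

first = email_writer.build_request("Decline the Tuesday meeting politely.")
second = email_writer.build_request("Confirm the invoice was received.")
```

Both requests carry an identical `system` block and tool list. Only the `prompt` differs, which is the property that makes a specialist's output consistent from day to day.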

Why structured AI use outperforms casual chatting

Large language models are probabilistic. That means the same input can produce slightly different outputs because the model samples from a distribution of likely next words. This is not a bug. It is how these systems generate text. But it is also why casual chatting feels inconsistent. When you set up a specialist with a fixed role, fixed rules, and fixed examples, you are narrowing the probability space the model samples from. The outputs become tighter, more predictable, and closer to what you actually want.
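A toy calculation shows what "narrowing the probability space" means. The scores below are made-up numbers for four candidate next words, not values from any real model. The sketch converts scores to probabilities with a softmax and measures how spread out the choice is using entropy.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs):
    """Shannon entropy in bits; lower means a more predictable choice."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Hypothetical next-word scores for four candidate words.
loose_scores = [2.0, 1.8, 1.7, 1.5]   # vague request: candidates nearly tied
tight_scores = [5.0, 1.8, 1.7, 1.5]   # fixed role and examples: one clear favorite

loose = softmax(loose_scores)
tight = softmax(tight_scores)

print(f"loose: top word {max(loose):.2f}, entropy {entropy(loose):.2f} bits")
print(f"tight: top word {max(tight):.2f}, entropy {entropy(tight):.2f} bits")
```

When the candidates are nearly tied, repeated sampling lands on different words. When the instructions make one continuation clearly more likely, sampling picks it most of the time, and the entropy falls accordingly.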

There is also a context quality effect. Models behave better when the context is clean and focused. A chat thread that has drifted across ten topics confuses the model because it is pulling patterns from unrelated exchanges. A specialist with a single purpose keeps the context tight, which improves output quality. The degradation that long and messy contexts cause is often called context rot. Specialists address it structurally.

The seven step setup that works for any specialist

The setup pattern below works whether you are using Claude Projects, ChatGPT custom GPTs, Gemini Gems, or any similar system. It is deliberately platform-neutral because the principles are the same everywhere.

Step 1. Define the task narrowly

Not "content" but "LinkedIn posts for a B2B software audience". Not "research" but "competitive pricing research for SaaS products". The narrower the task, the better the specialist performs.

Step 2. Define the outcome

What does success look like? Specify word count, format, tone, structure, and required sections. Write this down as a concrete checklist the AI can match against.

Step 3. Write the standing instructions

These go into the system prompt or custom instructions field. They describe the role, the voice, the audience, and the non-negotiable rules. This is the backbone of the specialist.

Step 4. Add your rules as negatives

Models tend to follow explicit prohibitions closely. Writing out what the AI must not do is often more powerful than describing what it should do. For example: never use clickbait, never include emojis, never write in the first person plural. These hard rules shape output sharply.

Step 5. Give two or three real examples

Few-shot examples are the most underused trick in consumer AI. Paste two or three pieces of output that match your exact standard. The model will copy the pattern far more reliably than it will follow abstract instructions.
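Steps 3 through 5 all produce text that ends up in the same instructions field. The sketch below shows one way to assemble it. The `build_instructions` helper and the ROLE / RULES / EXAMPLE labels are an assumed layout for illustration, not a format any platform requires, and the example texts are placeholders for your own past work.

```python
def build_instructions(role, rules, examples):
    """Assemble role, hard rules, and few-shot examples into one text block."""
    lines = ["ROLE", role, "", "RULES"]
    # Each rule is phrased as a prohibition, as Step 4 suggests.
    lines += [f"- Never {rule}." for rule in rules]
    for number, example in enumerate(examples, start=1):
        lines += ["", f"EXAMPLE {number}", example.strip()]
    return "\n".join(lines)


instructions = build_instructions(
    role="You write LinkedIn posts for a B2B software audience.",
    rules=["use clickbait", "include emojis", "write in the first person plural"],
    examples=[
        "Placeholder: paste a real past post here.",
        "Placeholder: paste a second real past post here.",
    ],
)
print(instructions)
```

Keeping the three parts in separate arguments makes Step 7 easier later, because a refinement usually means adding one rule or swapping one example rather than rewriting the whole block.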

Step 6. Test with five different inputs

Do not test a new specialist with one example and call it done. Run five varied tasks through it. The weak spots show up only when you push it across a range of inputs.

Step 7. Refine based on the failure modes

Every specialist has quirks. Maybe it keeps adding headers you did not ask for. Maybe it sounds too formal. Add a rule that addresses exactly that. Over three or four iterations, the specialist becomes sharp enough to trust with real work.
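The checklist from Step 2 and the testing from Step 6 can be combined into a small script that checks each draft against your rules. This is a sketch under simple assumptions: the drafts are pasted in by hand, the checks are plain string matching, and the limits and section names are examples rather than recommendations. Three drafts are shown to keep it short, though the step calls for five.

```python
def check_output(text, max_words, banned_phrases, required_sections):
    """Return a list of checklist failures; an empty list means the draft passes."""
    failures = []
    word_count = len(text.split())
    if word_count > max_words:
        failures.append(f"too long: {word_count} words (limit {max_words})")
    lowered = text.lower()
    for phrase in banned_phrases:
        if phrase.lower() in lowered:
            failures.append(f"banned phrase: {phrase}")
    for section in required_sections:
        if section.lower() not in lowered:
            failures.append(f"missing section: {section}")
    return failures


# Stand-ins for what the specialist returned on varied test inputs.
drafts = {
    "product launch": "Hook: Shipping faster starts with fewer meetings.\n"
                      "Takeaway: Cut one recurring meeting this week.",
    "hiring update": "You won't believe this hiring trick.\n"
                     "Takeaway: Write the job post last.",
    "pricing change": "Hook: Our pricing page had eleven options. Now it has three.",
}

report = {
    name: check_output(
        text,
        max_words=150,
        banned_phrases=["you won't believe"],
        required_sections=["Hook:", "Takeaway:"],
    )
    for name, text in drafts.items()
}

for name, failures in report.items():
    print(name, "->", failures or "pass")
```

Each failure in the report points at a candidate rule for Step 7. If the same failure appears across several inputs, it belongs in the standing instructions.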

Five specialists worth building first

If you are starting from zero, these five cover most of the high-value ground for anyone running a business, managing content, or doing knowledge work. They are also simple enough to get right on your first try.

The email writer

Set it up with your voice, typical email length, your signature style, and the kinds of people you usually write to. Feed it three of your past emails as examples. From then on, you paste a rough bullet list of what you want to say and it returns a polished message in your voice.

The content creator

Give it your brand voice, your audience, your content pillars, and examples of past posts. Scope it narrowly to one platform. A LinkedIn specialist and a Twitter specialist should be two different setups, not one combined setup. They will both perform better.

The researcher

Build it with web search enabled and instructions that force source verification, recent date filtering, and a structured output format. Tell it to flag anything uncertain. A researcher specialist saves hours of tab switching for competitive analysis, news scanning, or product comparison.
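The structured output format is easier to enforce if you decide up front what one finding must contain. The sketch below is one possible shape, with invented field names, placeholder example.com sources, and an assumed 90-day freshness window. It sorts findings into usable ones and ones the researcher should flag as uncertain.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Finding:
    claim: str
    source: str       # where the claim came from; empty if none was found
    published: date
    verified: bool    # True only if a second source agreed


def triage(findings, today, max_age_days=90):
    """Split findings into usable ones and ones to flag as uncertain."""
    cutoff = today - timedelta(days=max_age_days)
    usable, flagged = [], []
    for item in findings:
        fresh = item.published >= cutoff
        if item.source and item.verified and fresh:
            usable.append(item)
        else:
            flagged.append(item)
    return usable, flagged


today = date(2025, 6, 1)
findings = [
    Finding("Competitor A raised its starter price.", "https://example.com/a", date(2025, 5, 20), True),
    Finding("Competitor B is dropping its free tier.", "", date(2025, 5, 28), False),
    Finding("Competitor C added annual billing.", "https://example.com/c", date(2024, 1, 15), True),
]

usable, flagged = triage(findings, today)
```

Here only the first finding is usable. The second has no source and the third is too old, so both land in the flagged pile for a human to check.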

The data analyst

If your platform supports file uploads and code execution, this one is a multiplier. Upload a spreadsheet, ask questions in plain language, and get back analysis, charts, and summaries. Give it instructions on how you want numbers formatted, what currency to use, and what kinds of insights to prioritize.

The task planner

This one breaks down big goals into sequenced steps. You tell it what you want to accomplish, and it returns a concrete plan with timelines and dependencies. Train it on how you actually work, including your time constraints and the tools you use.

Projects are the missing layer most people skip

Every major AI platform now supports some form of project or workspace. Claude Projects, ChatGPT projects, and Gemini Gems are the common names. A project is a container that holds a specialist, its files, its instructions, and all the conversations you have had with it. This is where consistency compounds. When you run three different projects for three different clients, each one stays clean because the context never leaks between them.

Projects also let you upload reference files that the specialist can pull from automatically. If you are running a content specialist, uploading your brand guidelines, your style guide, and ten past pieces of work means every new piece the AI writes is grounded in your actual standards. This is retrieval-augmented generation at the consumer level, and it works remarkably well for any task where you have accumulated reference material over time.
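The idea behind retrieval-augmented generation is to find the reference text most relevant to the question and place it in front of the model before it answers. The sketch below uses plain word overlap to pick a file. That is a deliberately crude stand-in: real platforms use more sophisticated retrieval, or simply load whole files into context, and the file names and contents here are invented.

```python
import re


def tokenize(text):
    """Lowercase words and numbers, as a set for overlap counting."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, files, top_k=1):
    """Return the names of the files sharing the most words with the question."""
    wanted = tokenize(question)
    ranked = sorted(
        files,
        key=lambda name: len(wanted & tokenize(files[name])),
        reverse=True,
    )
    return ranked[:top_k]


files = {
    "brand_guidelines.txt": "Voice is plain and direct. Avoid jargon and exclamation marks.",
    "pricing.txt": "The starter plan costs 29 dollars per month and includes three seats.",
}

question = "How much does the starter plan cost per month?"
chosen = retrieve(question, files)

# The retrieved text goes ahead of the question so the answer is grounded in it.
prompt = "\n\n".join(files[name] for name in chosen) + "\n\nQuestion: " + question
```

The pricing file is selected because it shares the most words with the question, so the model reads the real price instead of guessing one.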

How files change AI accuracy in practice

When an AI has access to your actual documents, the hallucination rate drops sharply. The model no longer has to guess what your pricing structure looks like or how your contracts are worded, because it can read the real version. This matters most in two situations. First, when the task involves specific numbers, names, or policies that the AI cannot know from training data. Second, when consistency with past work matters more than creative freedom.

There is a practical limit. Most platforms cap the total file size and the amount of content the AI can consider at once. The second limit is called the context window. Claude's standard context window is around two hundred thousand tokens, which is roughly 150,000 words of English text, about the length of a long novel. ChatGPT and Gemini have their own limits, which vary by plan and model. For most beginner workflows these limits are more than enough, but if you start uploading entire books or huge databases you will hit the ceiling and need to be selective about what the AI sees.
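A rough budget check helps you decide what to upload. The sketch below assumes about four characters per token, which is a common rule of thumb for English text and not an exact count, and it uses the two hundred thousand token figure mentioned above. The file names, sizes, and reserve are made up for illustration.

```python
CHARS_PER_TOKEN = 4        # rough rule of thumb for English text (assumption)
CONTEXT_WINDOW = 200_000   # tokens, the figure quoted in the text


def estimate_tokens(text):
    """Approximate token count from character length."""
    return len(text) // CHARS_PER_TOKEN + 1


def select_files(files, reserve_for_chat=20_000):
    """Keep files in the given priority order until the budget is spent."""
    budget = CONTEXT_WINDOW - reserve_for_chat
    kept, used = [], 0
    for name, text in files:
        cost = estimate_tokens(text)
        if used + cost <= budget:
            kept.append(name)
            used += cost
    return kept, used


# Files listed from most to least important; contents faked by length only.
files = [
    ("style_guide.txt", "x" * 40_000),
    ("past_posts.txt", "x" * 200_000),
    ("full_book.txt", "x" * 2_000_000),
    ("pricing.txt", "x" * 8_000),
]

kept, used = select_files(files)
print(kept, used)
```

The book alone would need about half a million tokens, so it is skipped while the smaller, higher-priority files fit with room to spare. For an exact count, use the tokenizer or usage figures your platform provides.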

Connectors are where workflows stop being manual

Connectors are the bridges that let your AI specialist interact with other tools. Gmail, Google Drive, Notion, Slack, calendars, databases. When a specialist can read your emails, search your drive, and post to your Slack, you stop being the middleman. Instead of copying information from one app to the AI and then from the AI back to another app, the AI handles that movement directly.

The technical mechanism here is usually something called MCP, short for Model Context Protocol, which is an open standard that lets AI systems talk to external tools in a consistent way. You do not need to understand the protocol to use it. You just need to know that when you connect a tool to your AI, the AI can now read from it, write to it, or trigger actions inside it. Start with one connector, usually your email or your notes app, and only add more once you have a workflow that clearly needs it.
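For the curious, this is roughly what a connector call looks like on the wire. MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and arguments. The tool name `search_notes` and its `query` argument below are hypothetical, since each connector defines its own tools, and the sketch only builds the message without sending it anywhere.

```python
import json


def tool_call_request(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request asking a server to run one tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# "search_notes" and its arguments are invented for this example.
request = tool_call_request(1, "search_notes", {"query": "Q3 pricing"})
print(json.dumps(request, indent=2))
```

The AI platform generates and sends messages like this for you. Seeing the shape makes it clearer why one standard lets the same specialist talk to email, notes, and calendars without custom glue for each.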

The insight most beginners miss

The goal is not to make the AI smarter. The AI is already as smart as it is going to be for this conversation. The goal is to make the task clearer. Every minute you spend improving your instructions, examples, and context saves ten minutes of editing bad output later. People who complain that AI is mediocre are almost always people who gave it mediocre inputs. The model is a mirror of how well you defined the job.

The mistakes that quietly ruin beginner setups

- Giving the AI a role that is too broad. "Marketing assistant" is vague. "LinkedIn post writer for B2B SaaS founders" is specific. The narrower version always performs better.

- Writing instructions in abstract language. Use concrete examples of what you want and what you do not want. Show rather than tell.

- Starting every conversation from scratch. If you find yourself typing the same setup paragraph repeatedly, that paragraph belongs in a project or custom instructions, not in each new chat.

- Ignoring the output and hoping the next try will be better. Every time the AI gives you something wrong, tell it exactly what was wrong. That feedback teaches the specialist over time if your platform supports memory, and it sharpens the current session regardless.

- Building one specialist for everything. Splitting into focused specialists is always better than combining them into a Swiss Army knife that does ten jobs badly.

- Not testing edge cases. A specialist that works on easy inputs but breaks on hard ones is not production ready. Throw it unusual inputs during setup.

A workflow you can start using today

Open whichever AI platform you already use. Create a new project and name it after one specific task you do repeatedly, such as weekly newsletter writing or client email replies. In the project instructions, write three sections. First, the role, which is one sentence describing what the AI is doing. Second, the standing rules, which is a bulleted list of hard requirements. Third, the examples, which is two or three real pieces of past output that match your standard. Upload any reference documents that help the AI stay grounded in your voice or your facts.

Use the specialist for one week on real tasks. Every time the output falls short, do not just correct it manually and move on. Instead, update the project instructions so that specific failure mode never happens again. By the end of the week, your specialist will be noticeably sharper than it was on day one, and you will have spent roughly an hour on setup to save several hours every week going forward.

Where AI still needs human judgment

A specialist is a tool, not a decision maker. Three categories of work still require you to stay in the loop. The first is anything involving legal, financial, or medical consequences, where the cost of being wrong is high and irreversible. The second is anything involving taste, where the standard is subjective and the AI has no way to know your specific preferences without extensive feedback. The third is anything involving ethics or interpersonal judgment, where the right answer depends on context the AI cannot see.

The workflow that works best is AI as the first draft and the human as the editor. The AI handles the volume and the structure. You handle the judgment calls and the final polish. When people try to invert this, using AI to make final decisions and humans to merely execute, the quality drops and the errors accumulate.

Real world applications across different kinds of work

Content operators are using specialist stacks to produce daily social posts, weekly newsletters, and long-form articles across multiple brands without voice drift between them. The trick is one specialist per brand, never one specialist trying to handle many brands. Small business owners are using email specialists to handle routine client correspondence in a consistent tone, research specialists to track competitors, and data specialists to analyze sales reports.

Traders and analysts are using research specialists for market scanning, pattern recognition across news cycles, and structured output of trade thesis documents. The specialist does not make the trade decision but it compresses hours of information gathering into minutes of reviewable output. Researchers and knowledge workers are using document specialists trained on their accumulated reading to answer questions against their own knowledge base, which turns years of saved articles and notes into a queryable archive.

The pattern across all of these is the same. The person who wins is not the person with the best AI. It is the person who took the time to define their own work precisely enough that AI could handle the repeatable parts.

The final shift worth making

Stop thinking in prompts and start thinking in systems. A prompt is a one-time request that vanishes when the conversation ends. A system is a specialist with rules, examples, files, and memory that keeps working for you next week and next month. The people who build systems get compounding returns from AI. The people who keep typing fresh prompts every time are paying the same setup cost over and over. The tools are ready. The only question is whether you are willing to spend one focused hour setting up something that replaces ten hours of future work.


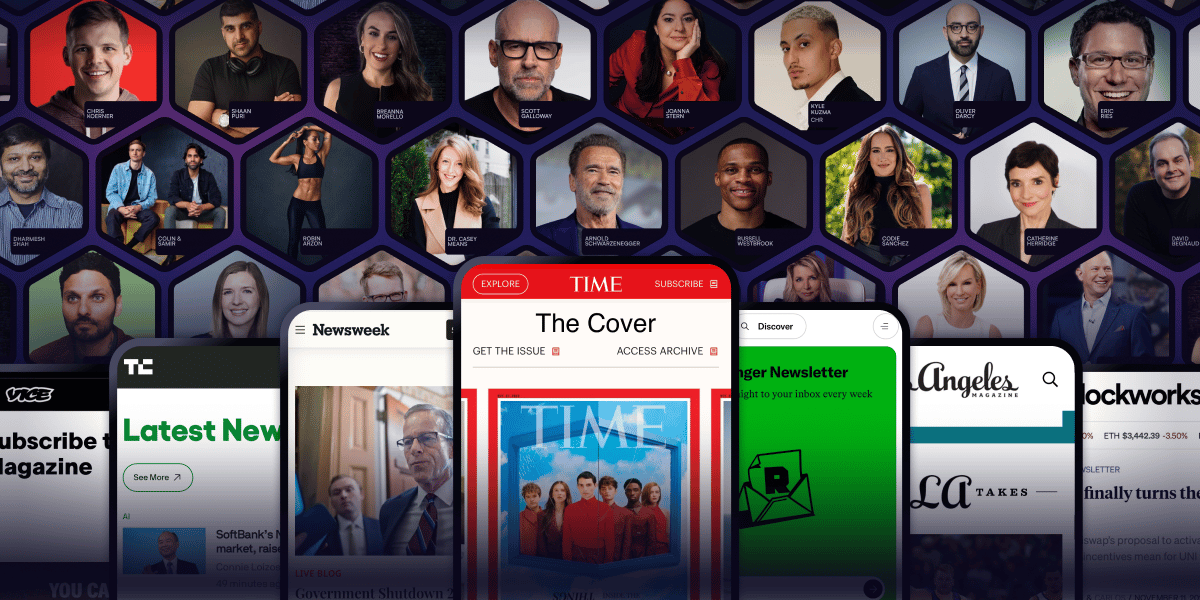

Arnold Schwarzenegger has a newsletter.

Yeah. That Arnold Schwarzenegger.

So do Codie Sanchez, Scott Galloway, Colin & Samir, Shaan Puri, and Jay Shetty. And none of them are doing it for fun. They're doing it because a list you own compounds in ways that social media never will.

beehiiv is where they built it. You can start yours for 30% off your first 3 months with code PLATFORM30. Start building today.