Vionix AI Intelligence Brief

Editorial Memo on AI Infrastructure Economics

Something quiet has happened underneath the AI boom. The story stopped being about models and became a story about capital, silicon, and electricity. The companies racing to define the next decade of intelligence are no longer just building software. They are building the largest industrial infrastructure cycle in modern technology history, and the shape of that buildout is now the single most important variable in how AI gets priced, deployed, and distributed.

Compute used to be a line item. It is now the constraint that defines what AI can do, who can afford to do it, and which countries get a seat at the table. Understanding this shift is no longer a hardware conversation. It is the foundation of every serious decision about AI strategy in 2026 and beyond.

How A Hardware Industry Became The Backbone Of Intelligence

Every major leap in artificial intelligence has been preceded by a leap in compute. The early decades of AI ran on general purpose CPUs designed for sequential logic, which meant neural networks remained a research curiosity for nearly forty years. The shift came when researchers realised that GPUs, originally built for rendering graphics, were extraordinarily good at the parallel matrix math that neural networks depend on. That accident of architecture is the reason deep learning happened when it did.

Nvidia turned that accident into a platform. CUDA, the company's parallel computing framework, became the software layer on which almost every modern AI breakthrough was trained. Over nearly two decades it accumulated libraries, optimisations, and a developer base so deep that moving away from CUDA still costs serious engineering effort today. This is why Nvidia ended up holding roughly eighty percent of the AI accelerator market by revenue, a figure that peaked near eighty seven percent in 2024 and is now projected by analysts to drift toward seventy five percent by the end of 2026 as alternatives mature.

The 2020 to 2023 GPU shortage exposed something deeper than a supply chain hiccup. It revealed that compute was no longer a commodity input but a strategic asset. Training costs for frontier models climbed into the hundreds of millions of dollars per run. Scaling laws gave the industry an empirical excuse to keep spending, since each tenfold increase in compute reliably produced measurable capability gains. That conviction is what set the table for the spending cycle now underway.

The Capital Cycle That Has No Modern Precedent

The numbers from the largest American technology companies have moved from impressive to historically unusual. The big four hyperscalers, Amazon, Microsoft, Google, and Meta, are now expected to spend close to seven hundred billion dollars combined on capital expenditure in 2026 according to CNBC reporting on company guidance and analyst estimates. Goldman Sachs projects total hyperscaler capex from 2025 through 2027 will reach roughly 1.15 trillion dollars, more than double the 477 billion spent across 2022 through 2024.

The composition tells the story. Roughly seventy five percent of that 2026 spend is tied directly to AI infrastructure rather than traditional cloud, according to industry trackers including Introl and IEEE ComSoc. Amazon alone has guided to roughly 200 billion dollars in 2026 capex. Alphabet is pointing to up to 185 billion. Meta has signalled spending could approach 100 billion. Microsoft is climbing more slowly but still raising. These are not normal corporate investment patterns. Capital intensity at these companies is now running between forty five and fifty seven percent of revenue, levels that were considered historically implausible for software businesses.

The cash impact is sharp. Morgan Stanley analysts project Amazon will run roughly seventeen billion dollars of negative free cash flow in 2026. Pivotal Research expects Alphabet's free cash flow to fall almost ninety percent this year. Alphabet held a twenty five billion dollar bond sale in November 2025 and quadrupled its long term debt that year to 46.5 billion. Across the big technology issuers, more than one hundred billion dollars of debt was raised in 2025 specifically to fund AI buildouts. Hyperscalers used to be cash machines. They are now becoming capital intensive industrial operators.

Why Every Hyperscaler Is Now Designing Its Own Chips

For most of the AI era, hyperscalers were Nvidia's largest customers. They are now becoming Nvidia's largest threats. Every major player has a custom silicon program in production. Google's Tensor Processing Units have been running internal workloads for nearly a decade and the seventh generation Ironwood arrived in November 2025. Amazon's Trainium 3 delivers 2.52 petaflops of FP8 compute with 144 gigabytes of HBM3e memory and is already being used by Anthropic and OpenAI for training and inference. Microsoft announced Maia 200 in January 2026 with more than 140 billion transistors on TSMC's 3 nanometre process and 216 gigabytes of HBM3e, the largest memory capacity among 2026 class custom accelerators. Meta has rolled out multiple generations of its Meta Training and Inference Accelerator. OpenAI signed more than ten billion dollars in custom chip orders with Broadcom for mass production starting in 2026.

The reasoning is structural. A single Nvidia Blackwell GPU is priced above thirty thousand dollars. A full GB300 NVL72 rack runs more than two million dollars and consumes over one hundred and thirty kilowatts under liquid cooling. When you are deploying tens of thousands of accelerators, owning the silicon means owning the economics. Bloomberg Intelligence projects custom AI accelerators will grow at roughly 44.6 percent compound annual rate through 2033, more than double the projected GPU growth rate over the same period.
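To put the Bloomberg Intelligence growth figure in perspective, the sketch below compounds a 44.6 percent annual rate. The eight year horizon (a 2025 base through 2033) is an assumption, since the projection's base year is not stated here, and the function names are illustrative only.

```python
import math

# Figure quoted in the text above.
CUSTOM_ACCELERATOR_CAGR = 0.446
# Assumption: growth is counted from a 2025 base year through 2033.
ASSUMED_YEARS = 8


def growth_multiple(annual_rate: float, years: int) -> float:
    """Factor by which a quantity grows when compounding at a fixed annual rate."""
    return (1.0 + annual_rate) ** years


def doubling_time_years(annual_rate: float) -> float:
    """Years needed to double at a fixed compound annual rate."""
    return math.log(2.0) / math.log(1.0 + annual_rate)


if __name__ == "__main__":
    multiple = growth_multiple(CUSTOM_ACCELERATOR_CAGR, ASSUMED_YEARS)
    doubling = doubling_time_years(CUSTOM_ACCELERATOR_CAGR)
    print(f"Growth multiple over {ASSUMED_YEARS} years: {multiple:.1f}x")
    print(f"Doubling time: {doubling:.2f} years")
```

Under that assumed horizon, the custom accelerator market would grow roughly nineteenfold, doubling a little under every two years.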

The most important shift is that custom silicon is being optimised for inference rather than training. Inference, which is the workload of actually serving a trained model to users, now consumes roughly two thirds of all AI compute according to Deloitte's TMT Predictions 2026. That ratio matters because training is a one time cost while inference scales linearly with usage. As AI products move from millions of users to billions, inference becomes the dominant economic problem, and purpose built inference chips beat general purpose GPUs on cost per token by meaningful margins.
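The fixed versus linear cost argument can be made concrete with a minimal sketch. Every dollar figure below is hypothetical and chosen only for illustration, and the model tracks spend rather than the compute share Deloitte measures.

```python
def crossover_tokens_millions(training_cost: float,
                              serving_cost_per_million: float) -> float:
    """Millions of tokens served at which cumulative inference spend equals training spend."""
    return training_cost / serving_cost_per_million


def inference_share(training_cost: float,
                    serving_cost_per_million: float,
                    tokens_millions: float) -> float:
    """Fraction of total lifetime spend that goes to inference."""
    inference_cost = serving_cost_per_million * tokens_millions
    return inference_cost / (training_cost + inference_cost)


if __name__ == "__main__":
    # Hypothetical inputs: a 100 million dollar training run and a
    # 2 dollar serving cost per million tokens.
    training = 100_000_000.0
    serving = 2.0

    crossover = crossover_tokens_millions(training, serving)
    print(f"Crossover volume: {crossover:,.0f} million tokens")
    for multiple in (0.5, 1, 2, 10):
        share = inference_share(training, serving, crossover * multiple)
        print(f"{multiple:>4}x crossover volume -> inference share {share:.0%}")
```

Because training is paid once and inference is paid per token, the inference share only rises as usage grows. In this toy model it reaches two thirds of spend at twice the crossover volume.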

The Collapsing Cost Of A Token And What It Reveals

Behind all this hardware spending sits a counterintuitive fact. The cost of running a model has been falling fast. Per token API pricing across leading providers dropped roughly sixty to eighty percent over the past year. Four forces drove the decline. Mixture of experts architectures cut compute per token significantly. New GPU generations like the H200 and B200 delivered two to three times the inference throughput of the H100. API call volumes grew five to ten times as AI moved from experimentation into production, spreading fixed costs across far more traffic. And aggressive entrants like DeepSeek forced every provider to match.

Frontier model pricing now reflects this. Claude Opus 4.7 sits at five dollars per million input tokens and twenty five dollars per million output, with up to ninety percent savings via prompt caching and fifty percent off through batch processing. Sonnet 4.6 runs at three dollars input and fifteen output. Haiku 4.5 is at one dollar input and five output. OpenAI's GPT 5.4 family delivered comparable cuts, and Google's Gemini Flash Lite holds the price floor at twenty five cents per million input tokens. The lesson is that even as the absolute capital required to build infrastructure has exploded, the marginal cost of intelligence delivered to a customer has been dropping sharply.
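A rough cost calculator shows how the list prices and discounts quoted above combine for a single workload. It is a sketch under stated assumptions: the ninety percent caching saving is applied only to the cached share of input tokens, cache write charges are ignored, and the batch discount is assumed to stack on top. Actual billing rules should be checked against the providers' pricing pages.

```python
# Dollars per million tokens (input, output), as quoted in the text above.
PRICES = {
    "opus": (5.00, 25.00),
    "sonnet": (3.00, 15.00),
    "haiku": (1.00, 5.00),
}

CACHE_SAVINGS = 0.90   # "up to ninety percent", treated here as a flat rate (assumption)
BATCH_DISCOUNT = 0.50  # fifty percent off through batch processing


def request_cost(model: str,
                 input_tokens: int,
                 output_tokens: int,
                 cached_fraction: float = 0.0,
                 batch: bool = False) -> float:
    """Estimated dollar cost of a workload under the assumptions stated above."""
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0 and 1")
    input_price, output_price = PRICES[model]

    fresh_tokens = input_tokens * (1.0 - cached_fraction)
    cached_tokens = input_tokens * cached_fraction

    dollars = (
        fresh_tokens * input_price
        + cached_tokens * input_price * (1.0 - CACHE_SAVINGS)
        + output_tokens * output_price
    ) / 1_000_000

    # Assumption: the batch discount applies to the whole bill.
    if batch:
        dollars *= 1.0 - BATCH_DISCOUNT
    return dollars


if __name__ == "__main__":
    for name in PRICES:
        base = request_cost(name, 1_000_000, 1_000_000)
        tuned = request_cost(name, 1_000_000, 1_000_000,
                             cached_fraction=0.8, batch=True)
        print(f"{name:>6}: list ${base:.2f}, cached and batched ${tuned:.2f}")
```

Output tokens dominate the bill at these prices, so caching input helps most on workloads with long, repeated prompts and short answers.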

This creates a tension that defines the current market. Hyperscalers are spending more than ever, while their per unit pricing is falling under competitive pressure. The bet they are making is that volume growth, agentic usage, and enterprise lock in will more than compensate. It is an industrial scale wager on demand, and it is the central economic story of AI in 2026.
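The wager can be expressed as simple arithmetic: if revenue is price times volume, a price cut of a given size needs a matching volume multiple just to hold revenue flat. The sketch below uses the price drops and volume growth ranges quoted earlier in this memo. It covers revenue only and deliberately ignores capital expenditure and margins.

```python
def breakeven_volume_multiple(price_drop: float) -> float:
    """Volume multiple needed to keep revenue flat after a fractional price drop."""
    if not 0.0 <= price_drop < 1.0:
        raise ValueError("price_drop must be in the range [0, 1)")
    return 1.0 / (1.0 - price_drop)


def revenue_multiple(price_drop: float, volume_multiple: float) -> float:
    """Resulting revenue relative to the starting point."""
    return (1.0 - price_drop) * volume_multiple


if __name__ == "__main__":
    for drop in (0.60, 0.80):
        needed = breakeven_volume_multiple(drop)
        print(f"{drop:.0%} price drop -> {needed:.1f}x volume to hold revenue flat")
        for volume in (5, 10):
            result = revenue_multiple(drop, volume)
            print(f"    {volume}x volume -> {result:.1f}x revenue")
```

A sixty percent price cut needs 2.5 times the volume to break even on revenue, and an eighty percent cut needs five times. Volume growth of five to ten times clears that bar, but only covers the capital bill if growth persists.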

The Constraint Has Quietly Shifted From Chips To Electrons

For most of the buildout, the binding constraint was GPU supply. That has changed. Power availability is now the variable that decides where data centres get built and how fast capacity comes online. According to the International Energy Agency, the pipeline of conditional offtake agreements between data centre operators and small modular reactor projects grew from twenty five gigawatts at the end of 2024 to forty five gigawatts by early 2026.

Utilities in Virginia, Oregon, and Texas have warned that AI data centre loads are outpacing planned generation. Interconnection queues in many regions stretch five to ten years. Microsoft has signed a long term power purchase agreement with Constellation Energy that underpins the restart of Three Mile Island Unit 1, securing 819 megawatts of dedicated capacity. Meta and others are advancing onsite natural gas generation to bypass strained grids. New developments are shifting toward regions with energy surplus, including the UAE, northeast Louisiana, and Alberta.

A modern AI data centre can be built in twelve to twenty four months. Expanding the grid to feed it can take a decade. That gap is now the single most important physical constraint in the entire AI value chain.

Compute As Statecraft

A second front has opened. Governments now treat AI compute as critical national infrastructure rather than as a commercial concern. Global spending on sovereign AI systems is projected to surpass one hundred billion dollars by 2026 according to industry trackers, with the EU AI Continent Action Plan committing roughly two hundred billion euros toward domestic data centre capacity, chip design, and procurement policy. South Korea announced plans with Nvidia and local partners to deploy more than two hundred and sixty thousand GPUs across sovereign clouds and AI factories. India's government emphasised national compute capacity at the India AI Impact Summit in early 2026, including a partnership exploration between OpenAI and Tata Group for local data centre buildout.

The Gulf has gone furthest. Saudi Arabia is building a new AWS region backed by more than 5.3 billion dollars of investment, alongside a separately announced AWS HUMAIN AI Zone and a ten billion dollar Google Cloud joint investment with the Saudi Public Investment Fund. Microsoft committed 15.2 billion dollars to UAE data centre capacity through 2029 and Oracle deployed the region's first OCI Supercluster powered by Nvidia Blackwell GPUs in Abu Dhabi. The strategic logic across these countries is the same. Whoever hosts the compute hosts the inference, and whoever hosts the inference shapes the economic and informational layer that runs on top of it.

Where The Argument Splits

The most contested debate inside the industry is whether the current spend reflects durable demand or accumulating overcapacity. The bull case rests on agentic AI workloads, where models execute long autonomous tasks and consume orders of magnitude more tokens than chat interactions. AI spending across Ramp's customer base grew thirteen times over the past year, and Anthropic moved from one billion dollars in annualised revenue in January 2025 to roughly thirty billion by April 2026 according to reporting in Tech Insider. If those figures hold, that is the steepest revenue ramp ever recorded for a software company.

The bear case is that flat rate consumer pricing has been masking unsustainable usage patterns. Anthropic moved aggressively toward per token billing precisely because heavy agentic users on its Max plan were consuming far more compute than the subscription price covered. OpenAI's head of ChatGPT acknowledged on a public podcast that unlimited AI plans may be as economically incoherent as unlimited electricity plans. If a meaningful share of current revenue depends on inflated flat rate consumption that will not survive the move to metered pricing, the demand signal underneath this entire infrastructure cycle is softer than headline numbers suggest.
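The flat rate problem comes down to a break-even calculation. The sketch below uses entirely hypothetical numbers for the subscription price, the provider's serving cost, and user consumption. None of them are taken from any provider's actual plans.

```python
def breakeven_tokens_millions(monthly_price: float,
                              cost_per_million: float) -> float:
    """Monthly usage, in millions of tokens, at which a flat plan stops covering its compute."""
    return monthly_price / cost_per_million


def monthly_margin(monthly_price: float,
                   cost_per_million: float,
                   tokens_millions: float) -> float:
    """Provider margin in dollars on one subscriber; negative means a loss."""
    return monthly_price - cost_per_million * tokens_millions


if __name__ == "__main__":
    # Hypothetical inputs, for illustration only.
    price = 200.0        # flat monthly subscription, dollars
    serving_cost = 4.0   # blended provider cost per million tokens, dollars

    limit = breakeven_tokens_millions(price, serving_cost)
    print(f"Break-even usage: {limit:.0f} million tokens per month")

    usage_profiles = [("light chat user", 5.0), ("heavy agentic user", 500.0)]
    for label, usage in usage_profiles:
        margin = monthly_margin(price, serving_cost, usage)
        print(f"{label}: {usage:.0f}M tokens -> margin ${margin:,.0f}")
```

With these illustrative inputs, a light user is highly profitable while a heavy agentic user costs the provider nine times the subscription price. That asymmetry is what metered pricing is meant to correct.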

Both views can be partly true. The buildout is real, the demand is real, and the risk of overshoot is also real. Capital cycles of this magnitude rarely calibrate perfectly to demand in real time.

What Comes After Scarcity

If the buildout succeeds, compute will eventually become abundant rather than scarce. That changes the rules. Margin compression will hit cloud providers first, since differentiation will move from raw GPU access to higher level capabilities including data, fine tuning, agent orchestration, and integration into enterprise workflows. The next architectural frontier is already emerging in research labs. Photonic computing uses light rather than electrons to move data at lower energy cost. Neuromorphic chips mimic the spiking patterns of biological neurons. Marvell acquired Celestial AI for up to 5.5 billion dollars in December 2025, gaining photonic interconnect technology. None of these are mainstream in 2026 but each represents a credible long term pressure on the current architecture.

Edge inference is the second shift. Specialised inference silicon is becoming small enough and efficient enough to run capable models on phones, laptops, vehicles, and industrial equipment without a round trip to a hyperscale data centre. Apple, Qualcomm, and others have built entire product roadmaps around this distribution of intelligence. The implication is that the centre of gravity of AI compute will eventually decentralise, even as the largest training runs remain concentrated in a handful of mega facilities.

For emerging markets including Bangladesh, this matters more than the headline capex numbers suggest. As compute decentralises and inference costs collapse, the meaningful AI work in countries that cannot fund hyperscale buildouts shifts toward application sovereignty. That means adapting global foundation models to local language, legal context, and service needs rather than competing at frontier scale. The infrastructure question for these economies is not whether to build their own GPU clusters but how to position to capture value from cheaper inference, sovereign cloud regions hosted by global providers, and locally tuned models built on open weights. Latam GPT in Latin America and Switzerland's Apertus are early templates of this approach.

The Through Line

The simplest way to read this entire moment is that compute has become the foundational economic layer of artificial intelligence, in the way that oil was for the industrial economy and silicon was for the personal computing era. The companies and countries that control compute will shape what AI does, who can use it, and how value gets distributed. Capital, silicon, and electricity are now interlocked in a way they never were in any previous technology cycle.

For builders and operators, the practical takeaway is that infrastructure assumptions need to be revisited every twelve to eighteen months. Token costs are falling. Custom silicon is changing inference economics. Power availability is constraining where deployment is possible. Sovereign rules are constraining where data can live. The era of treating compute as an invisible utility has ended. The era of treating compute as a strategic input that shapes product, pricing, and geography has fully begun.

Source Notes

CNBC, Tech AI spending may approach 700 billion dollars this year, February 6 2026.
Network World, Hyperscaler backlogs show growing demand for AI infrastructure, April 2026.
Dell'Oro Group, Hyperscaler AI Deployments Lift Data Center Capex to Record Highs, September 16 2025.
Bloomberg Intelligence custom AI accelerator projections, 2026.
CNBC, Nvidia sales are off the charts, but Google, Amazon and others now make their own custom AI chips, November 21 2025.
Microsoft Maia 200 announcement, January 26 2026.
Reuters and Data Center Dynamics on OpenAI and Broadcom custom silicon partnership, September 2025.
International Energy Agency, Data centre electricity use surged in 2025, April 2026.
Tom's Hardware on US grid stress and onsite generation, January 5 2026.
Anthropic and OpenAI official pricing pages, April 2026.
Finout, Anthropic API Pricing in 2026, April 2026.
Tech Insider, Anthropic vs OpenAI 2026, April 16 2026.
CNBC, AI demand and Anthropic per token billing, April 17 2026.
World Economic Forum, How shared infrastructure can enable sovereign AI, February 16 2026.
World Economic Forum, AI infrastructure as critical infrastructure, April 2026.
TechPolicy Press, Rethinking Sovereign AI as Strategy, March 3 2026.
Quaylogic, Global Data and AI Investments in Emerging Markets, February 24 2026.
Goldman Sachs, hyperscaler capex projections 2025 to 2027.

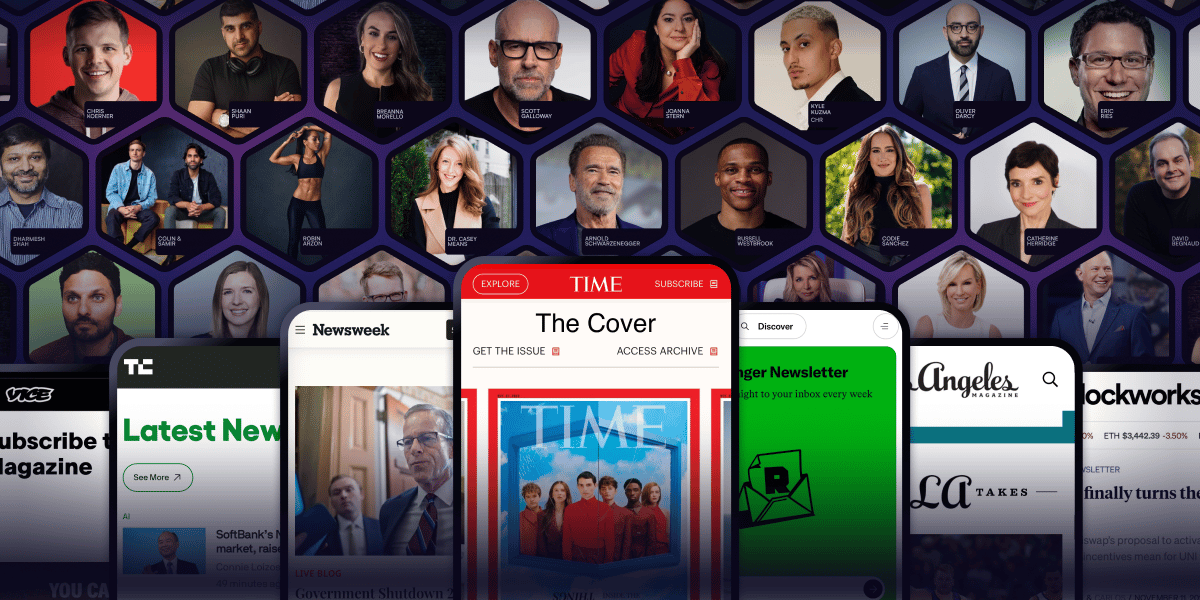

Arnold Schwarzenegger has a newsletter.

Yeah. That Arnold Schwarzenegger.

So do Codie Sanchez, Scott Galloway, Colin & Samir, Shaan Puri, and Jay Shetty. And none of them are doing it for fun. They're doing it because a list you own compounds in ways that social media never will.

beehiiv is where they built it. You can start yours for 30% off your first 3 months with code PLATFORM30. Start building today.